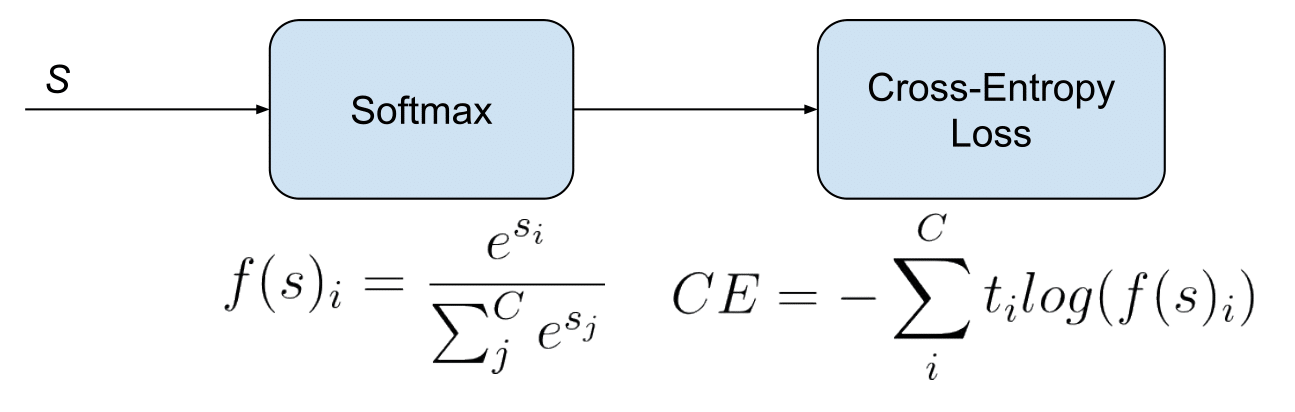

I took the data as a sample under the logit model with slope $a = 0.3$ and intercept $b = 0.5$. For this $a$, we can show the evolution of cross entropy and accuracy as $b$ varies (on the same graph). The $a$ minimizing the cross entropy is 1.27.

In other words, the larger $L$ is, the less surprised person $Q$ is by the results of our die rolls. The smaller $H(p,q)$ is, the closer $L$ is to $1$.

The combination of nn.LogSoftmax and nn.NLLLoss is equivalent to using nn.CrossEntropyLoss. This terminology is a particularity of PyTorch: nn.NLLLoss in fact computes the cross entropy, but takes log-probability predictions as inputs, whereas nn.CrossEntropyLoss takes scores (sometimes called logits).

As we'll see later, the cross-entropy was specially chosen to have just this property. This cancellation is the special miracle ensured by the cross-entropy cost function.
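To make the LogSoftmax plus NLLLoss equivalence concrete, here is a minimal sketch in plain Python rather than PyTorch. The helper names (`log_softmax`, `nll_loss`, `cross_entropy_from_scores`) and the example scores are hypothetical and only mirror what the PyTorch modules compute for a single sample; this is not the library's implementation.

```python
import math

def log_softmax(scores):
    """Turn raw scores (logits) into log probabilities."""
    m = max(scores)  # subtract the max for numerical stability
    log_sum = m + math.log(sum(math.exp(s - m) for s in scores))
    return [s - log_sum for s in scores]

def nll_loss(log_probs, target):
    """Negative log-likelihood: expects log probabilities as input."""
    return -log_probs[target]

def cross_entropy_from_scores(scores, target):
    """Cross entropy computed directly from raw scores in one step."""
    m = max(scores)
    log_sum = m + math.log(sum(math.exp(s - m) for s in scores))
    return log_sum - scores[target]

# Hypothetical scores for a 3-class problem; the true class is index 0.
scores = [2.0, -1.0, 0.5]
target = 0

two_step = nll_loss(log_softmax(scores), target)      # LogSoftmax, then NLL
one_step = cross_entropy_from_scores(scores, target)  # scores in, loss out

print(two_step, one_step)  # the two values agree up to rounding
```

The only difference between the two routes is where the log-softmax happens: inside the loss, or in a separate step before it.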

When $\gamma = 0$, Focal Loss is equivalent to Cross Entropy. In the experiments, $\gamma = 2$ worked best for the authors of the Focal Loss paper. With $\gamma = 2$, the loss for a predicted probability of 0.9 comes out to 4.5e-4, down-weighted by a factor of 100; for 0.6 it is 3.5e-2, down-weighted by a factor of 6.25.

Model A's cross-entropy loss is 2.073; model B's is 0.505. Cross-entropy loss is the sum of the negative logarithms of the probabilities predicted for each student.

To combat this problem, mean absolute error (MAE) has recently been proposed as a noise-robust alternative to the commonly-used categorical cross entropy (CCE).

Define a "probability vector" to be a vector $p = (p_1,\ldots, p_K) \in \mathbb R^K$ whose components are nonnegative and which satisfies $\sum_{k=1}^K p_k = 1$. For two probability vectors $p$ and $q$, the cross entropy $H(p,q) = -\sum_{k=1}^K p_k \log(q_k)$ can be used to measure the consistency of $p$ and $q$.

When we use the cross-entropy, the $\sigma'(z)$ term gets canceled out, and we no longer need to worry about it being small.
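The focal loss figures quoted above can be checked with a short sketch. It assumes the usual form $(1 - p_t)^\gamma \cdot (-\log p_t)$, where $p_t$ is the probability predicted for the true class. It also assumes a base-10 logarithm, because that is the base that reproduces the quoted values; many implementations use the natural logarithm instead. The function names are hypothetical.

```python
import math

def cross_entropy(p_t, log=math.log10):
    """Cross entropy for one example, given the true-class probability p_t."""
    return -log(p_t)

def focal_loss(p_t, gamma=2.0, log=math.log10):
    """Cross entropy scaled by the modulating factor (1 - p_t) ** gamma."""
    return (1.0 - p_t) ** gamma * cross_entropy(p_t, log)

def down_weight_factor(p_t, gamma=2.0):
    """How many times smaller the focal loss is than plain cross entropy."""
    return 1.0 / (1.0 - p_t) ** gamma

for p_t in (0.9, 0.6):
    print(p_t, focal_loss(p_t), down_weight_factor(p_t))

# With gamma = 0 the modulating factor is 1, so focal loss is plain cross entropy.
print(focal_loss(0.9, gamma=0.0) == cross_entropy(0.9))
```

The well-classified example ($p_t = 0.9$) is scaled down far more than the harder one ($p_t = 0.6$), which is the point of the modulating factor.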
